llms.txt: How to Control What AI Says About Your Business

Jack Amin

Digital Marketing & AI Automation Specialist

Quick Answer

llms.txt is a proposed Markdown-based standard that acts as a curated table of contents for AI models. It helps AI systems like ChatGPT and Perplexity navigate your site's most important content cleanly, bypassing HTML noise. Combined with a strategic robots.txt, it allows businesses to guide AI citation while controlling training access.

llms.txt is a proposed web standard that gives businesses a way to guide what AI systems like ChatGPT, Perplexity, and Claude see when they visit your website. Unlike robots.txt, which tells search engine crawlers what not to access, llms.txt tells AI models what your most important content actually is — formatted in Markdown so language models can read it cleanly without parsing complex HTML, JavaScript, or navigation clutter. For Australian businesses already investing in AEO (Answer Engine Optimisation), llms.txt represents a practical next step: a lightweight file you can add to your site today that curates which pages AI systems prioritise when answering questions about your brand, products, or services.

What Is llms.txt and Where Did It Come From?

The llms.txt specification was proposed in September 2024 by Jeremy Howard, an Australian technologist and co-founder of fast.ai. His observation was straightforward: large language models increasingly rely on website content to answer user questions, but they face two fundamental problems. First, context windows are too small to hold most websites in full. Second, converting complex HTML pages into clean text that a model can understand is unreliable, because navigation menus, JavaScript-rendered content, ads, and sidebar widgets all create noise.

Howard's solution was a simple Markdown file placed at the root of your domain (yoursite.com/llms.txt) that acts as a curated table of contents for AI. Instead of letting models guess which pages matter, you tell them directly.

Companies including Anthropic, Cloudflare, Stripe, Zapier, Perplexity, Vercel, and Coinbase have since published their own llms.txt files. The WordPress plugin Yoast SEO now supports one-click llms.txt generation, and platforms like Webflow provide upload support. As of early 2026, over 780 websites have published llms.txt files, though this remains a small fraction of the web.

How llms.txt Actually Works

An llms.txt file follows a specific Markdown structure. At minimum, it includes an H1 heading with your business name, a blockquote summary describing what you do, and then organised sections with links to your key pages. Each link can include a brief description to give AI systems context about what the page contains.

Here is what a basic llms.txt file looks like for a service business:

# Acme Web Solutions

> Acme Web Solutions is an Australian web development and digital marketing agency
> based in Sydney, specialising in WordPress, SEO, AEO, and marketing automation.

## Services

- [Web Development](https://acme.com.au/services/web-development): Custom WordPress and headless CMS builds for Australian businesses
- [SEO & AEO](https://acme.com.au/services/seo-aeo): Search engine and answer engine optimisation services
- [Marketing Automation](https://acme.com.au/services/marketing-automation): Dynamics 365 and HubSpot implementation

## About

- [About Us](https://acme.com.au/about): Our team, approach, and 10+ years of experience
- [Case Studies](https://acme.com.au/case-studies): Client results across eCommerce, training, and professional services

## Resources

- [Blog](https://acme.com.au/blog): Guides on SEO, AEO, web development, and digital strategy
- [FAQ](https://acme.com.au/faq): Common questions about our services, pricing, and process
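If your key pages already live in a spreadsheet or CMS export, the structure above is simple enough to generate rather than hand-type. The following is a minimal Python sketch, not an official tool: the `Link` class and `render_llms_txt` function are hypothetical names made up for this illustration, and the data is a trimmed-down version of the Acme example.

```python
from dataclasses import dataclass


@dataclass
class Link:
    """One entry in an llms.txt section (hypothetical helper type)."""
    title: str
    url: str
    description: str = ""


def render_llms_txt(name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Render an H1, a blockquote summary, then one H2 section per group of links."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            entry = f"- [{link.title}]({link.url})"
            if link.description:
                # The description is optional, so only add the colon when present.
                entry += f": {link.description}"
            lines.append(entry)
        lines.append("")
    # Collapse trailing blank lines to a single final newline.
    return "\n".join(lines).rstrip() + "\n"


example = render_llms_txt(
    "Acme Web Solutions",
    "Acme Web Solutions is an Australian web development and digital marketing agency.",
    {
        "Services": [
            Link(
                "Web Development",
                "https://acme.com.au/services/web-development",
                "Custom WordPress and headless CMS builds",
            ),
        ],
        "Resources": [Link("FAQ", "https://acme.com.au/faq")],
    },
)
print(example)
```

Generating the file from structured data makes it easier to keep in step with the site, but the curation decision (which pages go in) still has to be made by a person.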

The specification also supports a companion file called llms-full.txt, which contains your complete documentation or key page content compiled into a single Markdown file. Where llms.txt provides the map, llms-full.txt provides the entire library. AI coding assistants and research tools tend to use the full version more heavily — data from Mintlify suggests AI agents visit llms-full.txt roughly twice as often as the summary file.
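To make the map-versus-library distinction concrete, here is a rough Python sketch of how a llms-full.txt could be assembled from pages you have already converted to Markdown. The function name `build_llms_full`, the `Source:` line, and the page text are all assumptions made for illustration; the companion file has no strict layout, so treat this as one reasonable arrangement rather than the required one.

```python
def build_llms_full(site_name: str, pages: list[tuple[str, str, str]]) -> str:
    """Join (title, url, markdown_body) tuples into a single Markdown document."""
    parts = [f"# {site_name}"]
    for title, url, body in pages:
        # Each page becomes its own H2 section, with the source URL kept
        # next to the content so a reader (or model) can trace it back.
        parts.append(f"## {title}\n\nSource: {url}\n\n{body.strip()}")
    return "\n\n".join(parts) + "\n"


# Placeholder page bodies; in practice these would be your real page content.
full_text = build_llms_full(
    "Acme Web Solutions",
    [
        (
            "Web Development",
            "https://acme.com.au/services/web-development",
            "We build WordPress sites.\n",
        ),
        (
            "FAQ",
            "https://acme.com.au/faq",
            "\nPricing starts with a scoping call.\n",
        ),
    ],
)
print(full_text)
```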

What llms.txt Is Not

Before diving into implementation, it is important to understand what llms.txt does not do, because there is significant misinformation circulating about this standard.

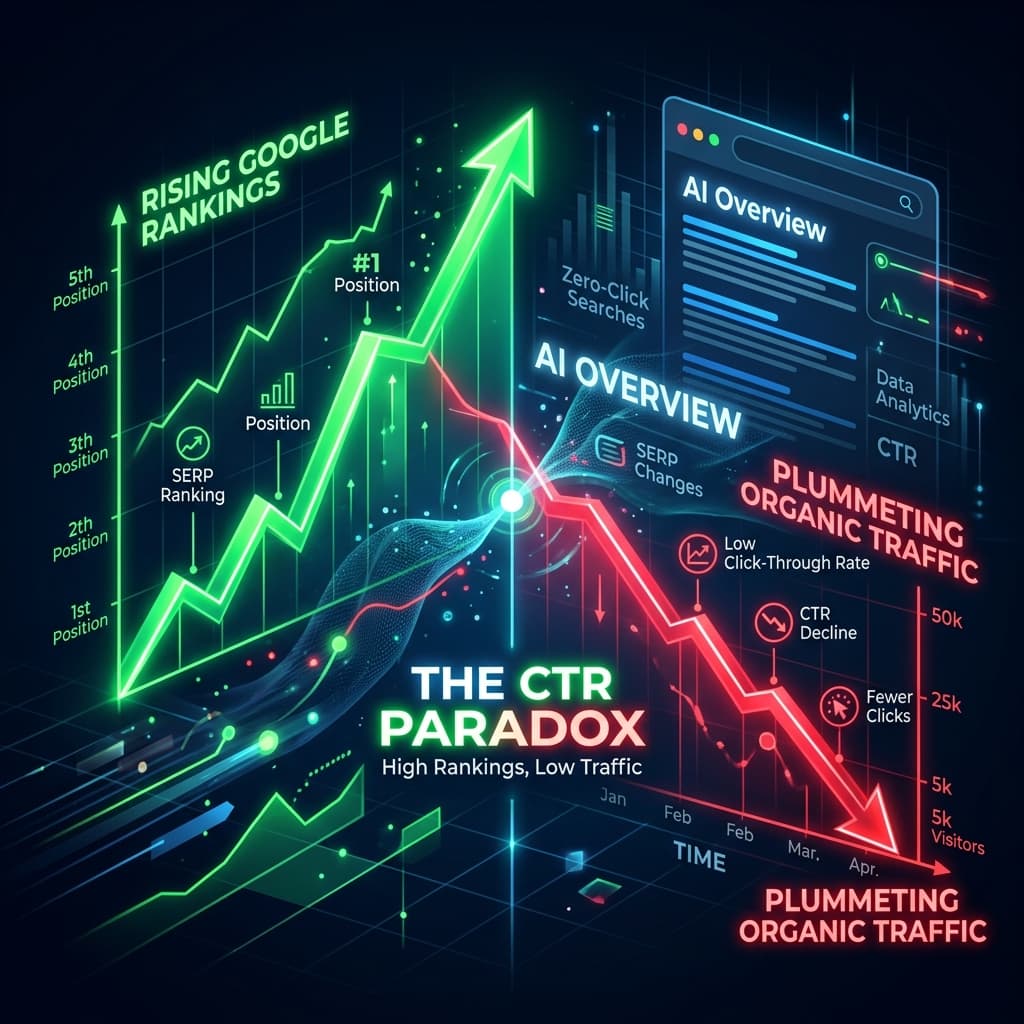

It is not a magic SEO button. Google's John Mueller stated publicly in 2025 that Google does not use llms.txt, and there is no evidence it influences Google AI Overviews or traditional search rankings. When Perplexity or ChatGPT cite your website, they do so because your page answered the query well — not because you had an llms.txt file pointing to it.

It is not a blocking mechanism. Unlike robots.txt, llms.txt does not prevent AI crawlers from accessing your content. It is a discovery aid, not an access control. Think of it as closer to an XML sitemap than a firewall.

It is not an official web standard. No major AI company — including OpenAI, Google, or Anthropic — has officially announced they follow llms.txt during inference. Server log analysis across multiple studies shows that AI-specific crawlers (GPTBot, ClaudeBot, PerplexityBot) rarely request the file. Adoption is still in its early stages.

However, this does not mean it is without value. The story is more nuanced than the sceptics suggest.

Why Implement llms.txt Anyway?

If AI crawlers are not actively using the file yet, why bother? There are several practical reasons.

Future-proofing with minimal effort. A basic file takes around 30 minutes to draft and needs only an occasional review to stay current. The AI search landscape changes rapidly, and when (not if) major platforms begin supporting llms.txt, sites that already have one will benefit immediately. Companies like Anthropic publishing their own llms.txt file signals the direction of travel.

Controlling the AI narrative. Even without direct crawler support, llms.txt forces you to think critically about which content represents your business. This exercise alone improves your broader AEO strategy. When you identify the 15–20 pages that best define your brand, you know exactly where to focus your schema markup, content quality, and internal linking efforts.

Developer and agent use cases. Where llms.txt already delivers measurable value is in developer-facing contexts. AI coding assistants like Cursor and GitHub Copilot use llms.txt to understand API documentation more accurately. LangChain's internal benchmarks found that agents using llms.txt to navigate documentation significantly outperformed those relying on traditional vector search or raw context stuffing.

AI-assisted research. Technical buyers increasingly evaluate vendors by asking AI tools to summarise a company's offerings. If your llms.txt clearly surfaces pricing pages, service descriptions, and case studies, you make it easier for AI-assisted researchers to find accurate information about your business — even if the mechanism is indirect.

The Bigger Picture: Your AI Visibility Stack

llms.txt does not work in isolation. It is one component of a broader AI visibility strategy that Australian businesses should be building right now. Here is how the key files work together:

robots.txt controls which crawlers can access your site. This is where you decide whether to allow or block AI training crawlers (like GPTBot and ClaudeBot) versus AI search crawlers (like ChatGPT-User and PerplexityBot). The distinction matters: training crawlers absorb your content into AI models without attribution, while search crawlers fetch content in real time and typically cite your page with a link back.

XML sitemap tells search engines about every page on your site. It is comprehensive — potentially thousands of URLs — designed for full crawling and indexation.

Schema markup (structured data) helps both search engines and AI systems understand what your content represents: products, services, FAQs, reviews, courses, organisations.

llms.txt curates your most important content specifically for AI consumption. Where your sitemap might include 500 pages, your llms.txt should highlight the 15–50 pages that best represent your business.

Answer-first content (your AEO-optimised pages) provides the actual substance that AI systems cite. No file can compensate for content that fails to directly answer user questions.

Together, these create a layered system: robots.txt manages access, sitemaps enable discovery, schema provides structure, llms.txt offers curation, and your content delivers the answers.

Training Crawlers vs Citation Crawlers: A Critical Distinction

One of the most important decisions Australian businesses face right now is understanding the difference between AI training crawlers and AI citation crawlers, because your robots.txt strategy should treat them differently.

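Training crawlers scrape your content to build or refine AI models. Your content gets absorbed into the model's knowledge, but you receive no attribution, no link, and no traffic. Key training crawlers include GPTBot (OpenAI), ClaudeBot (Anthropic), CCBot (Common Crawl), and Bytespider (ByteDance). Google-Extended belongs in the same group, although it is a robots.txt control token that governs whether Google may use your content for its AI models rather than a separate crawler.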

Citation crawlers fetch your content in real time to answer a specific user query. They typically cite your page and link back to it, driving referral traffic. Key citation crawlers include ChatGPT-User (OpenAI), OAI-SearchBot (OpenAI), PerplexityBot, and Claude-User (Anthropic).
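Before changing any rules, it helps to see which of these bots actually visit your site. The sketch below, in Python, sorts user-agent strings from a server log into the two groups described above. The function name is hypothetical, and the sample strings are simplified placeholders rather than verbatim user agents, so check each vendor's documentation for the exact values.

```python
from collections import Counter

# Tokens taken from the two lists above. Google-Extended is left out on
# purpose: it is a robots.txt token and does not show up as a user agent
# in request logs.
TRAINING_TOKENS = ("GPTBot", "ClaudeBot", "CCBot", "Bytespider")
CITATION_TOKENS = ("ChatGPT-User", "OAI-SearchBot", "PerplexityBot", "Claude-User")


def classify_user_agent(user_agent: str) -> str:
    """Return 'citation', 'training', or 'other' for a raw user-agent string."""
    ua = user_agent.lower()
    if any(token.lower() in ua for token in CITATION_TOKENS):
        return "citation"
    if any(token.lower() in ua for token in TRAINING_TOKENS):
        return "training"
    return "other"


# Simplified, illustrative user-agent strings (not copied from real logs).
sample_log = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (compatible; ChatGPT-User/1.0)",
    "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
    "Mozilla/5.0 (Windows NT 10.0) Firefox/120.0",
]
counts = Counter(classify_user_agent(ua) for ua in sample_log)
print(dict(counts))
```

Running something like this over a month of access logs gives you a baseline, so you can tell whether a later robots.txt change had any visible effect.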

A smart robots.txt strategy blocks training crawlers while allowing citation crawlers:

# Block AI training crawlers
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: CCBot
Disallow: /

# Allow AI search/citation crawlers
User-agent: ChatGPT-User
Allow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

# Allow traditional search engines
User-agent: Googlebot
Allow: /

User-agent: Bingbot
Allow: /

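This gives you the best of both worlds: your content can still appear in AI-generated answers with attribution, while compliant crawlers are told not to collect it for training datasets. Bear in mind that robots.txt is a request, not an enforcement mechanism, so it only works for crawlers that choose to honour it.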

Pair this with your llms.txt file, and you have a complete AI content strategy: block what you do not want, and guide what you do allow.
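It is worth testing robots.txt rules like the ones above before you deploy them. Below is a small Python sketch using the standard library's `urllib.robotparser`; the `allowed_agents` helper is a hypothetical name, the rules are a shortened copy of the example above, and the URL is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Shortened copy of the example rules above.
ROBOTS_TXT = """\
# Block AI training crawlers
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

# Allow AI search/citation crawlers
User-agent: ChatGPT-User
Allow: /

User-agent: PerplexityBot
Allow: /
"""


def allowed_agents(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Parse robots.txt text and report whether each agent may fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


result = allowed_agents(
    ROBOTS_TXT,
    ["GPTBot", "ClaudeBot", "ChatGPT-User", "PerplexityBot"],
    "https://yoursite.com/services/",
)
for agent, ok in result.items():
    print(f"{agent:15} {'allowed' if ok else 'blocked'}")
```

One practical detail: keep each `User-agent` line directly above its `Allow` or `Disallow` rule. Some parsers, Python's among them, treat a blank line as the end of a group, so a rule separated from its `User-agent` line by a blank line can be silently dropped.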

How to Implement llms.txt on Your Website

Implementation is straightforward regardless of your platform.

Step 1: Identify your key pages. List the 15–50 pages that best represent your business. Prioritise service pages, cornerstone blog content, FAQs, about/team pages, case studies, and pricing information. These are the pages you want AI to find when someone asks about your business.

Step 2: Create the file. Open any text editor and create a file called llms.txt using Markdown formatting. Follow the structure outlined earlier: H1 heading, blockquote summary, then H2 sections with linked page lists and brief descriptions.

Step 3: Upload to your root directory. Place the file at yoursite.com/llms.txt. On WordPress, you can use the Yoast SEO plugin (which now auto-generates the file) or the dedicated "Website LLMs.txt" plugin with over 30,000 installs. On custom platforms, upload via FTP or your hosting file manager.

Step 4: Verify accessibility. Check that the file loads correctly by visiting the URL directly. Use curl https://yoursite.com/llms.txt from a terminal to confirm it is publicly accessible and returns clean Markdown.

Step 5: Consider llms-full.txt. If your business has substantial content (documentation, extensive service descriptions, detailed guides), create a companion llms-full.txt that compiles your key content into a single Markdown file. This is especially valuable for businesses with technical documentation or complex service offerings.

Step 6: Review quarterly. Update the file whenever you publish significant new content, restructure your site, or change your service offerings. Stale links undermine the file's utility.
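Steps 4 and 6 are easy to automate in part. The Python sketch below checks that a file has the structure described in this article (an H1, a blockquote summary, H2 sections, and well-formed link items) and pulls out the URLs so you can test them for dead links with whatever tool you prefer. The function names and the regular expression are assumptions for illustration, not part of the llms.txt proposal, and the sketch works on text you have already downloaded rather than fetching anything itself.

```python
import re

# Matches "- [title](url)" with an optional ": description" suffix.
LINK_RE = re.compile(r"^- \[([^\]]+)\]\((https?://[^)\s]+)\)(?::\s*(.*))?$")


def validate_llms_txt(text: str) -> list[str]:
    """Return a list of structural problems; an empty list means the basics are in place."""
    problems = []
    lines = [line.rstrip() for line in text.splitlines()]
    non_empty = [line for line in lines if line]
    if not non_empty or not non_empty[0].startswith("# "):
        problems.append("first non-empty line should be an H1 heading")
    if not any(line.startswith("> ") for line in non_empty):
        problems.append("missing blockquote summary")
    if not any(line.startswith("## ") for line in non_empty):
        problems.append("no H2 sections found")
    for number, line in enumerate(lines, start=1):
        if line.startswith("- ") and not LINK_RE.match(line):
            problems.append(f"line {number}: list item is not a '- [title](url)' link")
    return problems


def extract_links(text: str) -> list[str]:
    """Collect every linked URL, e.g. to feed into a quarterly dead-link check."""
    return [m.group(2) for line in text.splitlines() if (m := LINK_RE.match(line.rstrip()))]


good = (
    "# Acme Web Solutions\n\n> Agency summary.\n\n## Services\n\n"
    "- [Web Development](https://acme.com.au/services/web-development): Custom builds\n"
    "- [FAQ](https://acme.com.au/faq)\n"
)
bad = "Acme Web Solutions\n\n## Services\n\n- Web Development\n"

print(validate_llms_txt(good))
print(validate_llms_txt(bad))
print(extract_links(good))
```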

What Australian Businesses Should Do Now

The practical reality in February 2026 is that llms.txt is a low-cost, low-risk investment that positions your business for where AI search is heading. The implementation effort is minimal, a few hours at most including page selection and review, and the main risk is letting the file go stale.

If you are already doing AEO work, adding llms.txt is a natural extension. You have already identified your key content, built answer-first pages, and implemented schema markup. llms.txt simply packages that information into an additional format designed for AI consumption.

If you are on WordPress, enable llms.txt through Yoast SEO or install a dedicated plugin. Review the auto-generated file and curate it to highlight your most important pages rather than accepting the default.

If you have a custom-built site, create the file manually and add it to your deployment pipeline. If you are using Next.js, several community plugins handle generation automatically.

Regardless of platform, audit your robots.txt to ensure you are making deliberate decisions about training versus citation crawlers. The default "allow everything" approach means your content can be used to train AI models with no return to your business.

The window for establishing your AI visibility foundation — before the landscape becomes as competitive as traditional SEO — is still open. llms.txt is one more tool to claim it.

